* – This article has been archived and is no longer updated by our editorial team –

AI startup announces the launch of the new HyperSurfaces technology.

Using artificial intelligence algorithms based on the latest advances in sensors and Deep Learning, this new technology can transform any object of any material, shape and size into intelligent “HyperSurfaces”, thus merging the physical and the data worlds in a seamless manner, waving goodbye to keyboards, buttons and touch screens.

So, for example, a wooden kitchen table becomes the controller for the lights and thermostat of your living room; the floor becomes an advanced security system able to accurately distinguish the steps of a thief from those of your cat; and the entire surface of a car door becomes the command interface, a control panel freed from old buttons and knobs.

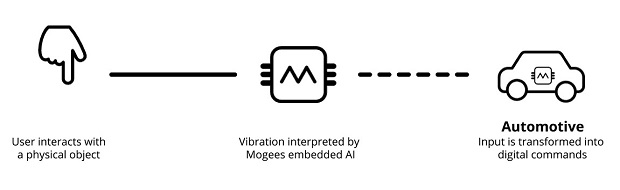

The HyperSurfaces neural network algorithms run on dedicated microchips and take advantage of the vibrations produced by human gestures or other interactions with physical objects to transform them into digital commands in real-time.

HyperSurfaces enables a completely new paradigm of interaction between people and technology. Any object in the physical world becomes intelligent. Every space becomes smart.

HyperSurfaces aims to revolutionize the way we live, blending the data world within any object around us. Consumer electronics, IoT, retail, transportation, augmented reality, smart facilities, all these domains can potentially be changed forever.

Welcome to a physical world turned seamlessly digital.

Below is our recent interview with Bruno Zamborlin, CEO at HyperSurfaces:

Q: How is this different to Mogees, both the company and tech?

A: After the experience with Mogees Pro and Mogees Play, we decided to focus on what we are best at: the underlying technology.

We raised a new investment round (as previously covered on TechCrunch), which we used to hire three top AI scientists, focusing completely on research and development. They are all from Goldsmiths, like myself, where we specialise in the niche of AI for real-time interaction.

The Mogees music products were based on the idea of letting users decide which objects they wanted to augment. The algorithm therefore had to work under several constraints: it could use only the very few examples of gestures provided by the user; it had to be completely agnostic about the type of object in use; and it was limited by the computation power of a mobile phone.

HyperSurfaces takes full advantage of large amounts of data collected on the given object as well as the power of cloud computing. Data provided by a multitude of users are stored in the cloud and processed by powerful computers over several hours. The system then automatically chooses the best-performing model for the given set of data and flashes it onto our system on chip (SoC). From then on, the SoC runs locally without needing an internet connection, for maximum speed and real-time responsiveness. On top of this, the technology gets better and better as more user data are collected.
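As a rough illustration of the "choose the best-performing model" step described above, here is a minimal Python sketch. It is not the HyperSurfaces implementation: the `Candidate` class, the `accuracy` and `select_best` helpers, the toy gesture labels and the feature values are all assumptions made up for this example, and simple validation accuracy stands in for whatever metric the real system uses.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

# Hypothetical sample type: (vibration features, gesture label).
Sample = tuple[Sequence[float], str]


@dataclass
class Candidate:
    """A candidate model: a name plus a prediction function (illustrative only)."""
    name: str
    predict: Callable[[Sequence[float]], str]


def accuracy(model: Candidate, samples: Sequence[Sample]) -> float:
    """Fraction of samples whose predicted label matches the true label."""
    correct = sum(1 for features, label in samples if model.predict(features) == label)
    return correct / len(samples)


def select_best(candidates: Sequence[Candidate], validation: Sequence[Sample]) -> Candidate:
    """Return the candidate with the highest accuracy on the validation set."""
    return max(candidates, key=lambda m: accuracy(m, validation))


# Toy validation data: invented feature vectors and labels.
validation = [
    ([0.1, 0.2], "tap"),
    ([0.9, 0.8], "knock"),
    ([0.2, 0.1], "tap"),
    ([0.7, 0.9], "knock"),
]

# Two toy candidates: one ignores its input, one thresholds the mean feature value.
always_tap = Candidate("always_tap", lambda f: "tap")
energy_threshold = Candidate(
    "energy_threshold",
    lambda f: "knock" if sum(f) / len(f) > 0.5 else "tap",
)

best = select_best([always_tap, energy_threshold], validation)
print(best.name)  # the model that would then be deployed to the device
```

In this sketch only the selected model would be sent on to the device; the comparison itself happens offline, which mirrors the split between cloud processing and local execution described in the answer.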

Q: Why has no one done this before?

A: The HyperSurfaces algorithms belong to the current state of the art in Deep Learning research. Almost all the technologies that we use are being investigated right now and it is no mistake that these applications are possible now and were not possible 3 or 5 years ago.

On top of this, the computational power of microchips has exploded in recent years, allowing machine learning algorithms to run locally in real time while keeping the bill of materials to just a few dollars.

Q: Have you registered any patents around this?

A: The company currently has four patents pending around the invention.

Q: What kind of applications will the HyperSurfaces SoC be used for?

A: It is difficult to imagine what the applications of this technology might end up being, just as it was difficult to imagine what the applications of a mobile phone could have been ten years ago.

For sure, the most immediate ones will include the possibility of creating technological objects made of materials that until now haven’t been associated with technology at all, such as wood, glass, metal, etc. Imagine a new wave of 3D wooden IoT devices 🙂

Other initial applications will probably include accommodating the desire of car manufacturers to eliminate buttons and switches from their car doors and cockpits, creating a brand new experience for the user. We are used to flat plastic surfaces, but this won’t be a requirement anymore.

However, we then foresee a second wave of applications, when companies and designers become more familiar with the idea of data-enabled products. Then the goal won’t be to replicate the interfaces that exist today, but to create brand new ones. It’s somewhat similar to what happened with touchscreens: they originally emulated keyboards but very quickly started to develop novel interaction paradigms such as swipes, pinches, etc. Imagine the same evolution multiplied by as many physical objects’ shapes and sizes as you can count!

Q: What was/is the hardest problem that needed to be solved to get this technology to work?

A: There are many. For sure, the real-time constraint is critical. In other fields of AI, such as finance or bioengineering, computers can return a response after a few minutes or even hours and this is not a problem. With interaction, a few milliseconds can make the difference.

Another big challenge is the complex individuality of human movements. Every person behaves in a unique way and performs gestures in their own way. Yet there are common features that AI can extrapolate from these unique patterns.
